Vinted Vitess Voyage: Chapter 4 - Autumn Strikes Back

This is the fourth in a series of chapters sharing our Vitess Voyage story. After a rough ‘Vinted Autumn’, this time we came to the conclusion that vertical sharding was no longer an option.

The Voyage of 2022

The first horizontally-sharded keyspace

The Vitess documentation and community already provide a significant amount of information about horizontal sharding. I’ll just share the most interesting parts of our own sharding.

As luck would have it, the table mentioned in the previous chapter served a simple use case and not a lot of different queries were issued to it.

We picked the user_id column as the sharding key after a basic manual inspection of which columns the queries used. Some queries were fixed by just adding an additional predicate on user_id. Around 8% of queries were using the primary key id and 2% other columns. Due to their inconsequential rate and importance, we decided to let them scatter anyway.
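A rough sketch of what such a column usage inspection could look like in Python. This is only an illustration, not the tooling we used: the items table, the sample queries and the classify/summarize helpers are hypothetical, and the regex check is deliberately naive (it only looks for an equality or IN predicate on the sharding column after the first WHERE, and ignores INSERTs, subqueries and quoting).

```python
import re
from collections import Counter

# Sharding column from the article; everything else here is hypothetical.
SHARDING_COLUMN = "user_id"


def classify(query: str) -> str:
    """Return 'single-shard' if the WHERE clause filters on the sharding
    column with '=' or IN, otherwise 'scatter'."""
    # Naive split: everything after the first WHERE keyword.
    parts = re.split(r"\bwhere\b", query, maxsplit=1, flags=re.IGNORECASE)
    if len(parts) < 2:
        return "scatter"
    pattern = rf"\b{SHARDING_COLUMN}\b\s*(=|in\b)"
    if re.search(pattern, parts[1], flags=re.IGNORECASE):
        return "single-shard"
    return "scatter"


def summarize(queries):
    """Percentage of queries per routing class."""
    counts = Counter(classify(q) for q in queries)
    total = sum(counts.values())
    return {kind: round(100 * n / total, 1) for kind, n in counts.items()}


# Made-up sample queries against a made-up table.
sample = [
    "SELECT * FROM items WHERE user_id = 42",
    "SELECT * FROM items WHERE id = 7 AND user_id = 42",
    "SELECT * FROM items WHERE id = 7",
    "UPDATE items SET seen = 1 WHERE user_id IN (1, 2)",
]
print(summarize(sample))
```

Anything classified as scatter is a candidate for either an extra user_id predicate or a conscious decision to let it hit all shards. The real routing decision should still be confirmed with vtexplain.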

As the saying goes, “Rome wasn’t built in a day”. We had to put in even more elbow grease:

- Rerun all queries through vtexplain + EXPLAIN FORMAT=VITESS to verify correct query routing

- Train ourselves: replay, break and recover the resharding process

- Modify migrations to support horizontal shards and test/break/recover (our own tasks using gh-ost)

- Custom auto-injection of the primary VIndex column predicate into appropriate queries. Just recently, we noticed that such a feature made it into an upcoming Rails version.

- Teach developers to work with horizontal Vitess shards.

First contribution

Anyhow, manual query parsing was certainly not going to cut it for us. vtgate instances logged queries with shard, vttablet type and used table tags, but after a second look the bulk of them had incorrect table tags. Additionally, they did not have any client connection identification, whereas the MySQL general log would contain such information. Besides, the Vitess version we used had just fallen out of support. Implementing such features might take months, or even longer. Yet, ‘Vinted Autumn’ was coming and there were other more complicated candidates for horizontal sharding.

With the help of Andres Taylor from PlanetScale, we added client session UUID and some missing table extraction to the query logs. Additionally, we flagged queries if they were in a transaction, which greatly improved query analysis. Then, of course, the matter of backporting remained.

Vitess later improved table and keyspace extraction quite a bit in v15.

Vitess upgrade

With more time available, we started testing the upgrade from v8 to v11, with additional backported fixes, on the same Vitess test cluster. The new version contained a great deal of improvements. Most notably:

- Golang upgrade from 1.13 to 1.16 resulted in 20-50% garbage collection time improvement for different components.

- More performant Cache Implementation for query plans using LFU eviction algorithm.

- ProtoBuf APIv2 and custom Protocol Buffers compiler. Interesting Vitess blog post about how they got there.

- MoveTables v2 - less manual cleanups, progress status, less overhead.

- Throttle API.

Comparing v8 vs v11 with the same workload, the test results were outright great:

- Query latency median (p50) was 0.3% lower for main application queries and 3.4% for job queries

- Latency p95 was 19.6% lower for main application and 19.3% for jobs.

- vtgate garbage collection time reduced by ~20% and vttablet by ~50%

- Though, a small resource usage increase (used up to ~2% more of CPU and up to ~4% more of memory)

Semi-sync + replicas by the dozen

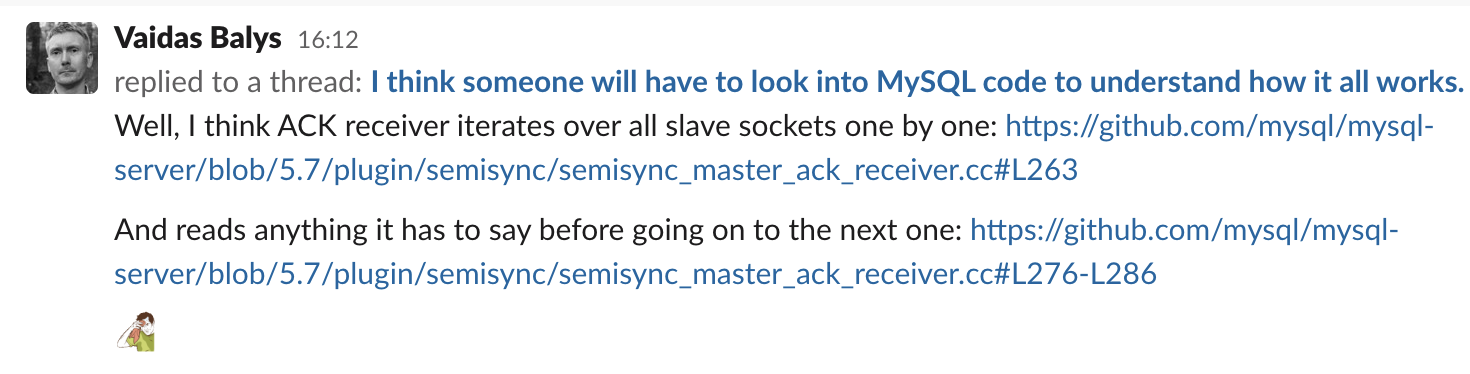

With an unexpected geopolitical situation escalating in February 2022 and a danger lurking around Lithuania, it was time to deploy a second region with replicas. Initially, all was fine, but some shards had more than a dozen replicas. Since we use semi-sync replication, a perplexing issue crept in beside inter-region latency. It really deserves a blog post of its own. Based on documentation and our configuration, the primary writes the data to the binlog and waits up to rpl_semi_sync_master_timeout=2147483646 milliseconds (roughly 25 days, so in practice indefinitely) to receive an acknowledgment from rpl_semi_sync_master_wait_for_slave_count=1 semi-sync enabled replicas that they have received the data (Fig. 1). We could not control the order in which replicas were asked for confirmation.

Figure 1: Semi sync problem

Latency of multiple remote round trips increased the duration of transactions a lot and filled up vttablet transaction pools quickly causing short outages. In the end, we decided to leave up to 2 replicas with semi-sync enabled in the primary region. All other replicas had semi-sync disabled.
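For a sense of scale, the rpl_semi_sync_master_timeout value quoted above can be converted from milliseconds with a few lines of Python. This is plain arithmetic on the number from our configuration, nothing Vitess-specific:

```python
from datetime import timedelta

# rpl_semi_sync_master_timeout as quoted in the text, in milliseconds.
TIMEOUT_MS = 2_147_483_646

timeout = timedelta(milliseconds=TIMEOUT_MS)

# Whole days and fractional days covered by the timeout.
whole_days = timeout.days
fractional_days = round(timeout.total_seconds() / 86_400, 1)

print(whole_days, fractional_days)

# The value is two below 2**31, i.e. close to the largest signed 32-bit integer.
print(TIMEOUT_MS == 2**31 - 2)
```

With a timeout this long, the primary does not fall back to asynchronous replication on its own, so a slow acknowledgment translates directly into a slow commit.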

There are numerous posts about semi-sync shortcomings, which I recommend you read:

- MySQL semi-sync replication: durability, consistency and split brains

- Face to Face with Semi-Synchronous Replication.

Autumn 2022

The new patched v11 was running everything fine and dandy. We did occasional vertical sharding and switched some read traffic to replicas. The write enthusiasm of the app's periodic jobs was curbed by the throttle API. We felt as ready as ever. But one does not simply dodge ‘Vinted Autumn’. vtgate metrics showed a total of 1M+ QPS. The most critical keyspaces had reached vertical scaling limits. The déjà vu of partial weekly downtimes at peak times kept us awake for several weeks. To say that the problems were complex for the SRE and Platform teams to solve alone would be an understatement. We had to get immediate help from product teams.

“Performance Task Force, Assemble!”

One of our Vinted cultural features is picking peculiar and contemporary names for teams. The Performance Task Force was assembled from “S-Class” engineers. After the initial one-day conference, the necessary confidence to shard horizontally was there.

Oh boy, I can’t wait to tell you the story of how it winds up! :)

“Engage.” - Jean-Luc Picard, Star Trek: The Next Generation